Model design is one of the most important stages in the development of any agent-based model (ABM). Get the design wrong and you might find yourself tied up in knots, battling against the structure of the project you defined months before. Get it right and you’ll leave yourself an extensible platform to develop into, allowing yourself time to concentrate on refining your model structure.

While developing an agent-based model during my PhD, I found that although many authors write about the application and implementation of ABM, each model is approached on a seemingly ad hoc basis, with little reference to a unifying framework or starting point. So I decided to develop my own.

The framework is divided into four sections, intended to be approached in the order in which they are presented. Within each section, subsections pose questions to the modeller about how they might approach each facet of the design. Only once the modeller has developed a strong understanding of each element of the design (including deciding whether each is, in fact, relevant) should they proceed with the development of the ABM. The framework is intended to be generic, applicable across disciplines and language neutral (although elements naturally align with the structure of some of the ABM tools).

The framework is outlined below. I’d be very interested to receive any feedback, positive or negative, or (even better) news of anyone applying this framework in the development of their agent-based model.

The Observer

The Observer refers to everything that exists outside of the simulation environment. These are the constructs within which the simulation exists, including the theoretical constructs, the role of the modeller, and the environment within which the simulation will be developed. It is important that all of these aspects are considered before continuing with model development; doing so should ensure that the modeller is taking the right steps in choosing to develop an ABM in lieu of other approaches.

In outlining the Observer, one should consider each of the following elements, answering each associated question, noting particularly where inherent uncertainty exists.

- Mission Statement: What is the fundamental process one is seeking to model? Why is ABM being used to simulate this process? Do alternative models exist, and will ABM demonstrate a significant advantage over them?

- Measuring Accuracy: How is the process under investigation ordinarily measured? What distribution and variance do these measurements show? What is the accuracy of these existing measurements? In view of existing measures of the process, what outputs will be generated during the simulation process? How will the simulation outputs be related directly to real-world measures? What statistical tests will be carried out to measure model accuracy?

- Software: Which software package will be used for the construction of the simulation? Can the model be developed using generic terms or is a modelling framework required? If so, which specific features are sought from a modelling framework? What is the modeller’s existing programming skill set? How feasible is an extension of this skill set within the time frame allowed for the development of this model?

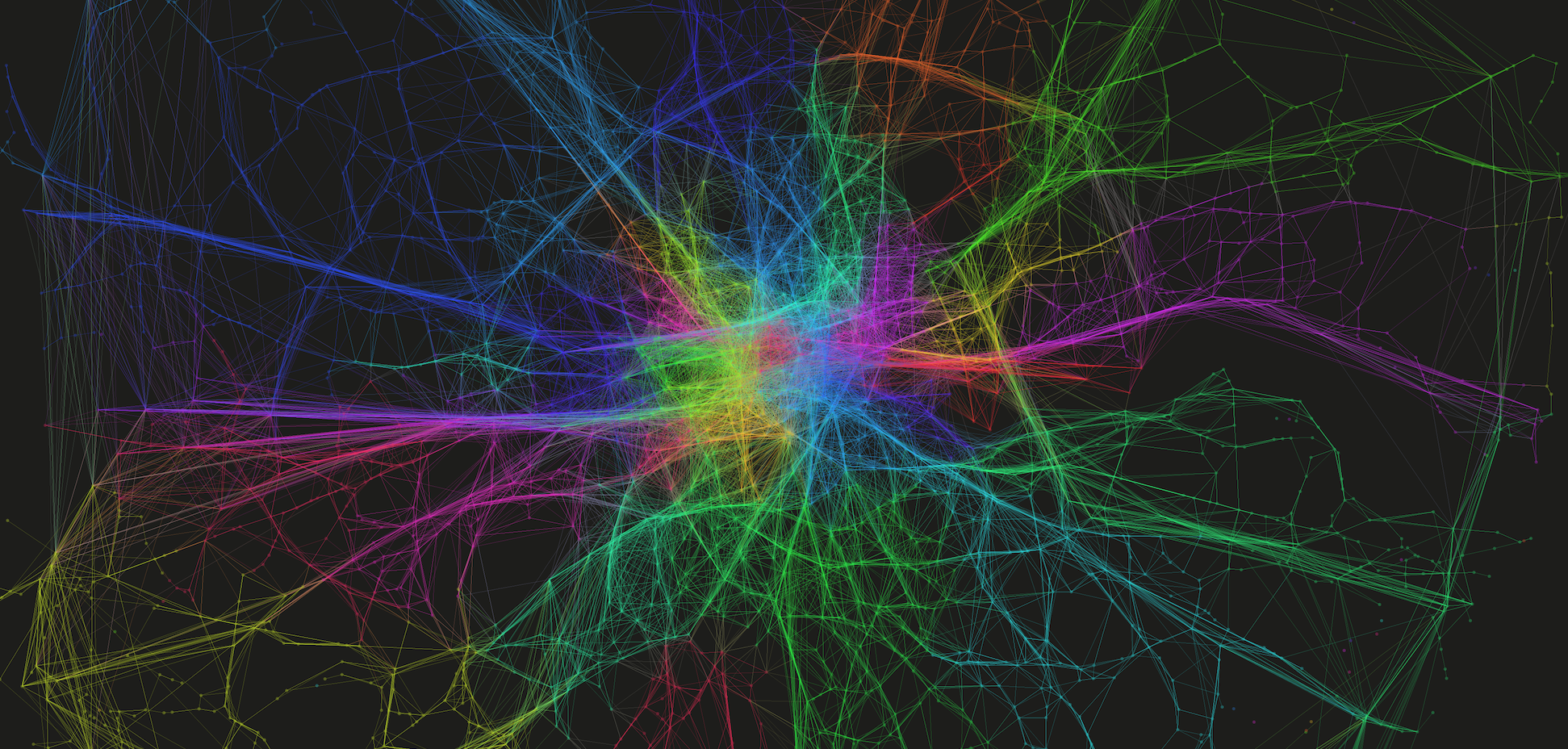

- Visualisation: Who is the audience for this model? Is the real-time visualisation of results important? What purpose does the visualisation hold? How much increased computation will the visualisation require (particularly where one is considering 3D animation)?

- Bias: From what perspective is the model being developed? What is the background of the modeller? What are the natural assumptions being built into the model? How can the influence of modeller bias be removed from the approach, to the greatest extent possible?
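
To make the Measuring Accuracy questions a little more concrete, here is a minimal Python sketch of one way simulation outputs might be related to real-world measures. The function names and the hourly counts are hypothetical and purely illustrative; root-mean-square error and mean bias are only two of many possible accuracy measures, and the right choice depends on how the process is ordinarily measured.

```python
import math
from statistics import mean


def rmse(observed, simulated):
    """Root-mean-square error between paired observed and simulated values."""
    if len(observed) != len(simulated):
        raise ValueError("observed and simulated must have the same length")
    return math.sqrt(mean([(o - s) ** 2 for o, s in zip(observed, simulated)]))


def mean_bias(observed, simulated):
    """Average signed error; a positive value means the model over-predicts."""
    return mean([s - o for o, s in zip(observed, simulated)])


# Hypothetical hourly counts: real-world measurements vs. simulation output.
observed = [120, 135, 150, 160]
simulated = [118, 140, 149, 166]

print(f"RMSE: {rmse(observed, simulated):.2f}")
print(f"Mean bias: {mean_bias(observed, simulated):+.2f}")
```

Deciding on measures like these before any agents are written gives the simulation a fixed target, rather than one chosen after the results are in.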

The World

The World refers to the modelled environment within which the simulation will take place. The World encompasses not only the physical constraints of the model, but also the global rules that define the behaviour of all objects within it. This definition should only be made in relation to the specifications made during the Observer definitions, and not with respect to Agent design.

Once more, a number of design aspects must be considered at this stage, but only within the context of those design decisions made during the Observer specification. Each design aspect again presents a number of questions that must be answered.

- External Systems: Are there any systems that interact with the process in question, but will not be modelled explicitly? What is the nature of these interactions? How will these external systems be encapsulated and represented within the model?

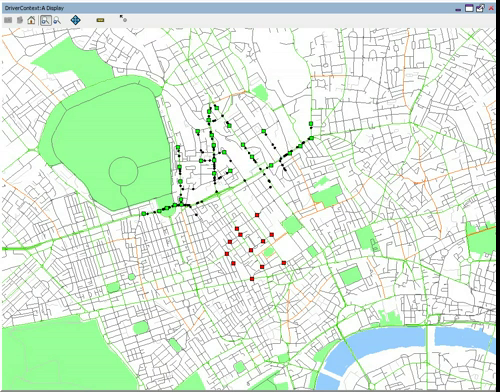

- Space: Are spatial interactions important within the process being examined? If so, within what kind of space do these interactions occur – geographic, continuous, gridded, topological? Which data sets are required to aid in the definition of the space?

- Time: Over what time period will the process be analysed? How long will one time step represent? Will a time step correspond to a real-world description of time?

- Physical Rules: Which physical rules, shaping the actions of everything within the World, require explicit definition?
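
As a rough illustration of how the Space, Time and Physical Rules answers might be written down, the sketch below defines a World with no reference to any agent. It assumes a gridded space and a fixed real-world duration per time step; the class, its fields and the five-minute step are all hypothetical choices, not part of the framework itself.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class World:
    """A gridded space plus a clock; every name here is illustrative."""
    width: int
    height: int
    start: datetime          # real-world time at tick 0
    step_length: timedelta   # real-world duration of one time step
    tick: int = 0

    def in_bounds(self, x: int, y: int) -> bool:
        """A global physical rule: nothing may exist outside the grid."""
        return 0 <= x < self.width and 0 <= y < self.height

    def advance(self) -> None:
        """Move the simulation forward by one time step."""
        self.tick += 1

    @property
    def now(self) -> datetime:
        """Real-world time corresponding to the current tick."""
        return self.start + self.tick * self.step_length


world = World(
    width=50,
    height=50,
    start=datetime(2024, 1, 1, 8, 0),
    step_length=timedelta(minutes=5),
)
for _ in range(12):
    world.advance()
print(world.now)
```

Keeping the mapping between ticks and real-world time in one place makes it easier to answer the temporal questions that come up again when specifying the Interactions.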

The Interactions

The specification of the Interactions does not refer to the definition of the agents themselves, but rather to how Agent-to-Agent interaction occurs. These definitions refer to the physical and collective rule sets that organise interactions. It is the manifestation of these interactions within the simulation that may form the representation of the process under examination; any definitions must therefore be made within the contexts described during the Observer and World definitions.

The elements of Interactions design are as follows, with the relevant design questions outlined. References to higher-level definitions are made where they need to be considered.

- Physical Interactions: Do the agents physically interact across the space defined within the World? Under what circumstances do these interactions occur? What is the impact of these physical interactions? Is there a product of the interaction? How do such interactions impact upon the agents themselves or upon other external systems? Are there any specific social constraints governing these interactions? What are the temporal considerations of these interactions in relation to the time definitions made within the World? How are these interactions recorded?

- Communication: Is there communication between agents? How do these communications occur? Through which medium? Are there any additional communications between agents and external systems? Are there any particular social rules governing communication? What are the temporal considerations of these interactions in relation to the time definitions made within the World? How are these interactions logged?

- Resource Exchange: Do agents exchange resources in any way? How do these interactions occur? What is lost and what is gained through each interaction? How do these interactions manifest themselves over space and time? How are these interactions recorded?
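
The Resource Exchange questions, and the recurring question of how interactions are recorded, can be sketched as below. This is a minimal example under stated assumptions: the `Holder` stub, the `InteractionLog` class and the rule that a giver cannot hand over more than it holds are all hypothetical, chosen only to show an interaction rule defined separately from any detailed agent behaviour.

```python
from dataclasses import dataclass, field


@dataclass
class Holder:
    """A hypothetical agent stub that only holds a stock of some resource."""
    name: str
    stock: int


@dataclass
class InteractionLog:
    """Records every exchange so interactions can be analysed afterwards."""
    records: list = field(default_factory=list)

    def record(self, tick: int, giver: str, receiver: str, amount: int) -> None:
        self.records.append(
            {"tick": tick, "giver": giver, "receiver": receiver, "amount": amount}
        )


def exchange(giver: Holder, receiver: Holder, amount: int,
             tick: int, log: InteractionLog) -> int:
    """Transfer up to `amount` units; what the giver loses, the receiver gains."""
    moved = max(0, min(amount, giver.stock))  # cannot give more than is held
    giver.stock -= moved
    receiver.stock += moved
    log.record(tick, giver.name, receiver.name, moved)
    return moved


log = InteractionLog()
a, b = Holder("a", stock=5), Holder("b", stock=0)
exchange(a, b, amount=3, tick=1, log=log)
exchange(a, b, amount=4, tick=2, log=log)  # only 2 units remain to give
print(a.stock, b.stock, len(log.records))
```

Because the rule lives in a single function, the answers to "what is lost and what is gained" are explicit, and every interaction is logged against the World's time step.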

The Agent

The design of the Agent is all about capturing the characteristics, actions, and decisions that influence an agent’s interactions with other agents and the wider environment. It is ultimately these behaviours that shape how the overall process is modelled, and how it evolves over space and time. It is therefore vital that the modeller starts explicitly considering the Agent design only once each level within the design hierarchy is completely specified, that is, once all higher-level entities have been fully understood, broken down and incorporated within the model. Only within the context of the prior specifications, which outline completely the environment and conditions in which the Agent exists, can an Agent be fully described.

Once one has defined this environment, the definition of the Agents involved in the simulation should proceed naturally. During this final process, the following design considerations should be examined in detail.

- Characteristics: What are the characteristics of the agents? Can agents be assigned to different profiles? What are the core traits that are required for specification of an agent’s actions? How do these properties vary across the population of agents?

- Decisions: What decisions are made by the agent (or type of agent) during the course of the simulation? What information sources are used during the formation of this decision? Through which type of mechanism are these decisions formed? How long do decisions take to make? Are decisions formed in consultation with other agents over a framework of communication?

- Actions: What actions does an agent, or type of agent, conduct during the simulation? How are these actions influenced by the agent’s characteristics? Are these actions directly relevant in simulating the process one wishes to examine? How are these manifested across the simulation space? How often do these actions take place relative to the temporal evolution of the simulation? Under what wider constraints (e.g. physical, moral framework) are these actions shaped?
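
To show how the three Agent considerations might map onto code, here is a minimal sketch in which characteristics are fields, decisions are one method and actions are another. Everything in it is an assumption made for illustration: the one-dimensional space, the `speed` and `risk_aversion` traits, the threshold decision rule and the fixed perceived risk of 0.5 are not prescribed by the framework.

```python
import random
from dataclasses import dataclass


@dataclass
class Agent:
    x: int                # position in a hypothetical 1-D space
    speed: int            # characteristic: cells moved per time step
    risk_aversion: float  # characteristic: 0 (bold) to 1 (cautious)

    def decide(self, perceived_risk: float) -> str:
        """Decision: move only if the perceived risk is tolerable."""
        return "move" if perceived_risk <= 1.0 - self.risk_aversion else "stay"

    def act(self, decision: str, world_width: int) -> None:
        """Action: step along the space, constrained by the World's edge."""
        if decision == "move":
            self.x = min(self.x + self.speed, world_width - 1)


# Characteristics vary across the population; the seed keeps runs repeatable.
rng = random.Random(42)
population = [
    Agent(x=0, speed=rng.randint(1, 3), risk_aversion=rng.random())
    for _ in range(5)
]

for tick in range(10):
    for agent in population:
        agent.act(agent.decide(perceived_risk=0.5), world_width=20)

print([agent.x for agent in population])
```

Separating `decide` from `act` keeps the information used in a decision distinct from its consequences, which mirrors the split between the Decisions and Actions questions above.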